Christopher Nolan's perspective on the emerging AI threat

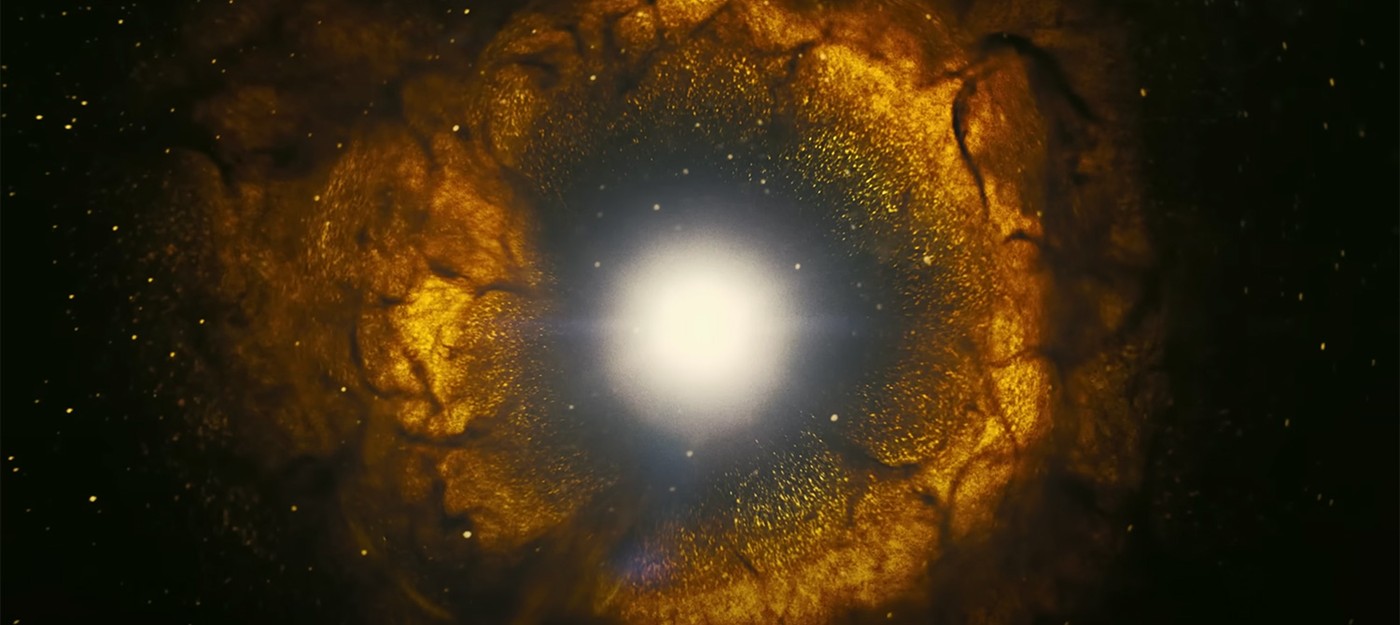

In a recent interview, renowned filmmaker Christopher Nolan drew a compelling analogy between the atomic bomb and the potential dangers posed by artificial intelligence (AI), reigniting a critical debate on the ethical implications of rapidly advancing technologies.

Speaking about the growing use of AI in weaponry, Nolan shared his perspective on the AI revolution, likening it to the introduction of the atomic bomb. While acknowledging a fundamental difference in the origins of these technologies – one being a fact of nature, the other, a man-made creation – he emphasized the shared potential for danger when such powerful technologies are "unthinkingly unleashed" on the world.

> The growth of AI in terms of weapons systems and the problems that it is going to create have been very apparent for a lot of years. Few journalists bothered to write about it. Now that there’s a chatbot that can write an article for a local newspaper, suddenly it’s a crisis.
>
> If we endorse the view that AI is all-powerful, we are endorsing the view that it can alleviate people of responsibility for their actions – militarily, socioeconomically, whatever. The biggest danger of AI is that we attribute these godlike characteristics to it and therefore let ourselves off the hook. I don’t know what the mythological underpinnings of this are, but throughout history there’s this tendency of human beings to create false idols, to mold something in our own image and then say we’ve got godlike powers because we did that.
>
> I feel that AI can still be a very powerful tool for us. I’m optimistic about that. I really am. But we have to view it as a tool. The person who wields it still has to maintain responsibility for wielding that tool. If we accord AI the status of a human being, the way at some point legally we did with corporations, then yes, we’re going to have huge problems.

Nolan reflected on his upbringing in the UK during the 1980s, a time when the fear of nuclear war was palpable. He noted that while fears and societal concerns may shift over time, the potential danger posed by nuclear weapons never truly disappeared. Nolan suggested a parallel with AI, warning that complacency could make society blind to emerging threats.

The filmmaker expressed concern about the increasing integration of AI into weapon systems, which he believes has been largely ignored until recently. Nolan further criticized the tendency to attribute "godlike" characteristics to AI, suggesting that such views may be used as an excuse to evade responsibility for the consequences of AI deployment.

Nolan warned that we are approaching a "tipping point" where AI systems might eventually become capable of self-improvement, further complicating the ethical landscape. He stressed the danger of an "abdication of responsibility," cautioning that the rush to deploy advanced AI could lead to unforeseen consequences.

The need for regulation was a recurring theme in Nolan's remarks. He expressed skepticism towards tech companies' calls for regulation, suggesting that they might be a political maneuver to shift responsibility away from the creators of these technologies. The difficulty, he argued, is that the technical complexity of AI makes it hard for elected officials to fully understand the technology and regulate it effectively.

Drawing on the story of Oppenheimer, the father of the atomic bomb, Nolan emphasized the complex relationship between science and government. He suggested that the lessons learned from Oppenheimer's story could provide valuable insights into the current predicament surrounding AI regulation.

Nolan concluded by pointing out the complexities involved in navigating the AI landscape. He criticized what he sees as disingenuous calls for regulation from the technology's inventors, arguing that the inventors bear a significant part of the responsibility for the potentially dangerous technologies they create.